All things must pass

Yes, AI may soon be smarter than humans. But rather than destroying humanity, could it lead us back to an analogue world?

I am an Elon Musk fan.

You can stop reading now, if you want. But you’d be stupid to. Just why so many people hate Elon Musk is partly a mystery to me. Yes, I perfectly understand that some humans do not like successful people, and that a perfectly orchestrated hate campaign has been running against the man ever since he took over Twitter (and renamed it X). But still - how can you detest someone who is so clearly one of the most intelligent men in the world, a character who comes along only a couple of times in every century?

A hundred years from now, who will the history books remember from the 2000s but Elon Musk? A man who has single-handedly ushered in the era of the electric car, who has shot rockets into space that were more sophisticated by a mile than anything that came before? Who dreamed up Hyperloop 20 years before it inevitably becomes a reality?

During the Covid years, an entire group of people from all over the world, the “Covid realists”, desperately waited for Elon Musk to come out and tell his version of events. Surely this ultra-smart guy would understand the futility and cruelty of lockdowns, the malarkey of masks? I myself was one of many who pestered Elon every day, tried to send him private messages and refreshed their computer screens for hours trying to be the first to comment on one of his posts, in the faint hope that the man would come out and say something, and make it clear to the world he was too smart to buy the bullshit.

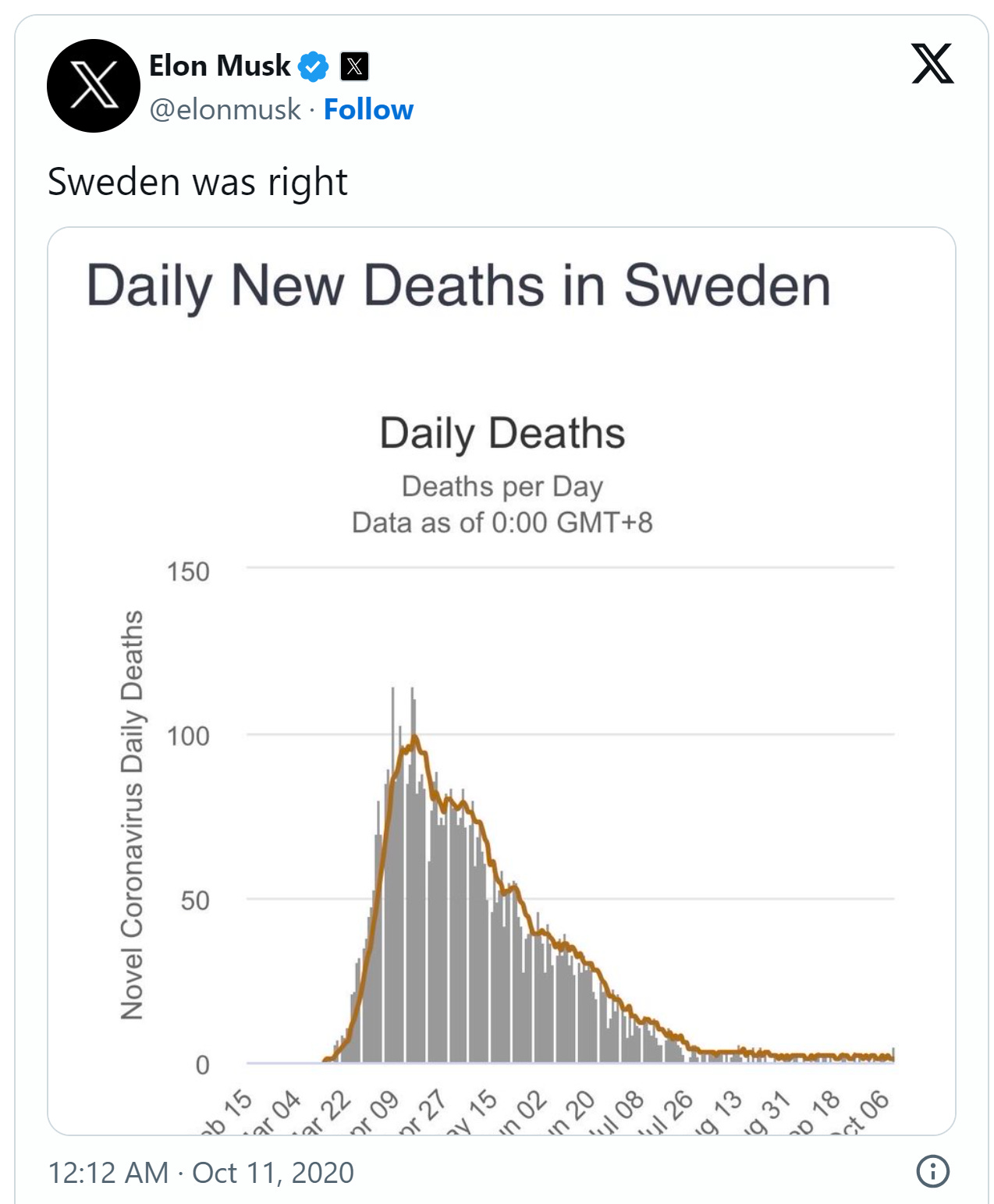

When he eventually did, his post was as short and clear as any he has made, as if the answer was so clear it needed no explanation:

And that was pretty much the end of his involvement in the cause until he published the Twitter Files. By that point, though, the propaganda machine had taken over and the image of Evil Elon was omnipresent in the mass media. I know so many people who hate Elon Musk, but when you ask them why, they have no answer.

Anyway, this week, Elon Musk made another statement designed to turn heads.

https://www.theguardian.com/technology/2024/apr/09/elon-musk-predicts-superhuman-ai-will-be-smarter-than-people-next-year

Even the Guardian, first in line in the Elon hate squad, couldn’t help but report Elon’s statement. Will he be right? Looking at the historical evidence, he’s hardly ever totally right, but the end results still usually exceed what many people thought possible.

On self-driving cars, for example, he may have been too optimistic, their future now being in severe doubt due to safety concerns and legal problems. But still - remember when Tesla was an exotic fancy for the rich and crazy? Look at 2024, and Tesla has become The Car, an almost Volkswagen-like symbol of normality. The Electric Car: no better or worse than the competition, perhaps even cheaper, but it works. Rather than propelling the eCar into another dimension, futuristic and out of reach, Elon’s biggest achievement must be that Tesla has become normal, and therefore not the future but the here and now.

https://www.theverge.com/2023/8/23/23837598/tesla-elon-musk-self-driving-false-promises-land-of-the-giants

So what about AI, then? What would it mean if AI really became so intelligent as to be indistinguishable from humans, or worse, smarter than them?

And here comes the twist. I firmly believe that almost everybody who works in or reports on this industry misses the point by a mile. We will not be able to prevent AI-created content from becoming so advanced that you won’t be able to tell it from human content.

Even now, when you look for the highlights of a football match on YouTube, you’ll often accidentally click on videos containing footage of the same match being played in a video game. Soon, as AI-powered voice emulators achieve near-perfect results, you’ll be unable to tell if what you are watching really happened or was generated by AI. Imagine thousands of companies operating under false names on YouTube, generating video content of events that never happened and gathering millions of clicks and advertising dollars.

When you chat to your friends on Facebook or any other platform, you’ll need to be careful not to be diverted to AI-powered chatbots that perfectly mimic your friends’ tone of voice and vocabulary. On Tinder, you may spend hours getting to know a person who never really existed, and there’ll be no way of telling if you are falling into a trap or talking to a real potential partner.

I have been working in the language industry for 20 years and am pretty familiar with how AI engines work. Pretty much like the Machine Translation engines we use, they are built on Large Language Models, which basically rehash existing content and statistically predict which words and phrases follow each other. On an even more sophisticated level, the modern AI tools use existing data and content to generate new content - new truths. As the amount of AI content overtakes the amount of human-generated content on the web, probably in the coming months, an entirely new truth will emerge - an AI-generated truth based on AI content, which once started with what humans wrote down on the web.

Scary though that sounds, it’s quite like what happens in “real life”: we all, as toddlers, begin to learn truths, statements and concepts which have been transcribed by others. We will never really know how correct our established truth is because we cannot ever go back to the first transcription of it. What if arms weren’t arms, people not people but animals, the solar system an invention of some creative soul millions of years ago? We have no way of knowing, and perhaps that is what truth is: not an actual piece of wisdom but a version of the world that our group has created, willingly or unwillingly, over centuries.

So if the same happens to AI content, it won’t really matter whether AI speaks the truth. Whatever it says will become the new version of the world, impossible to reverse.

Imagine if AI had existed in Covid times, fed by governments. It would have spat out article after article of government-approved propaganda, just as happened in reality but ten times as powerful and all-encompassing. It would have been more powerful than 1984’s Ministry of Truth, and none of us would now be here to question the narrative of what had to be done, what it yielded, and what it was motivated by.

So that is the first of my two possible scenarios as I try to sketch out the future of our world with AI in it. If AI becomes so powerful, then the only way to control it is to hand complete control of the Internet to governments, have them police each and every entry into the web’s database, program their own AI engines to combat AI content they do not like, and create a new truth for us that will not be contestable.

If that reminds you of North Korea, then perhaps we agree this future is not desirable (but likely).

However, I want to throw your way another hypothesis, my second scenario, which I have never read about or heard anyone talk about, anywhere.

If the Internet becomes impossible to trust, if anything you do or read online becomes as likely to be fake as real, then it becomes meaningless. It stops working. And could this be the end of the road for a process that we all deemed unstoppable? The digitization of our society, our online lives?

What do you do if Tinder stops working, if you no longer trust online banking, if your grandmother’s voice on the WhatsApp call might just as well be computer-generated? There’s only one solution - and that is to go out into the real world (or rather, our “old world”). Will people go on speed dates in bars again because they can then touch the other person’s arm and verify their presence? Will we be paying in cash or exchanging goods because our cryptocurrency platform could have been replaced by another, identical-looking one overnight?

If you think that’s crazy, try to think of a way to stop AI from developing. Network problems? Lack of processing power? Look at the progress in the last three years on these fronts and you’ll know that’s a faint hope. Human-based content verification and moderation? With AI producing a thousand times the amount of content humans would in one day, surely not. Unless we opt for the North Korea scenario above, but even that probably won’t be strong enough to stop an AI content explosion.

So what alternative do we have, other than taking life offline and “opting out” of the parallel universe that is emerging? None.

There are hundreds of “post-apocalyptic” movies like The Road, which start after an event that has changed the course of humanity irreversibly. These movies are probably so popular because they engage our fantasy and deal with a reality far from ours - but is it impossible? Could we end up in a “post-computer” world where all, or part, of society has opted out of the new world?

Well, don’t rule it out. We may soon reach the end of a road we thought would go on forever. As the machines become bigger and better than us, will we follow them or step out?

Unless there is an AI robot somewhere in a laboratory that can build AI robots, who can build 100 AI robots each, who can build 100 AI robots each. If this is the case, we’d be finished within a week. Maybe I am not who you think I am, and the world you think you live in is no longer the same. Perhaps you are reading AI-generated content now, created by a machine programmed by a human 100 years ago, and while you are waiting to see if the computers will take over the world, they already have?